Recently, I shared my predictions for how AI will change the learning process. In short, I expect AI to reduce the need for many skills, potentially disempowering the average user.

Just as the invention of writing made us worse at remembering things and the invention of the calculator made us worse at mental math, having a technology that can read emails, write code, and do homework for us will make most of us less proficient at doing those things.

But I think the average case hides a lot of variation. Many people will learn and think less, but some will learn a lot more.

I’ve been experimenting a lot with AI in my own research and learning, and the results have been all over the place. For some aspects of learning, AI works brilliantly, saving me hours of unnecessary effort. But in other cases the results are mediocre, and in some cases they are downright misleading.

Here are some of my tips about using AI as a tool to accelerate learning:

1. Choose which books to read (but you still need to read them).

AI is a great tool for book recommendations. I used it extensively in my recent Foundation project, often using ChatGPT and other tools to recommend books based on fairly specific criteria to complete my reading lists.

But while AI can give some great recommendations about what to read, AI summaries of those books aren’t a good substitute for reading the books themselves. Some of this is a problem of verifiability (more on that in a moment), but it’s also a problem with summaries in general – you don’t learn ideas in depth by reading summaries of them. Only by reading a book in its entirety can you really absorb the examples, knowledge base, and authorial perspective that allow you to use that information to reason about other things.

AI can help you figure out if a book isn’t worth your time so you can read from a tightly curated list that matches your interests, ability level, and knowledge gaps.

2. Source alternative suggestions (but do your own thinking).

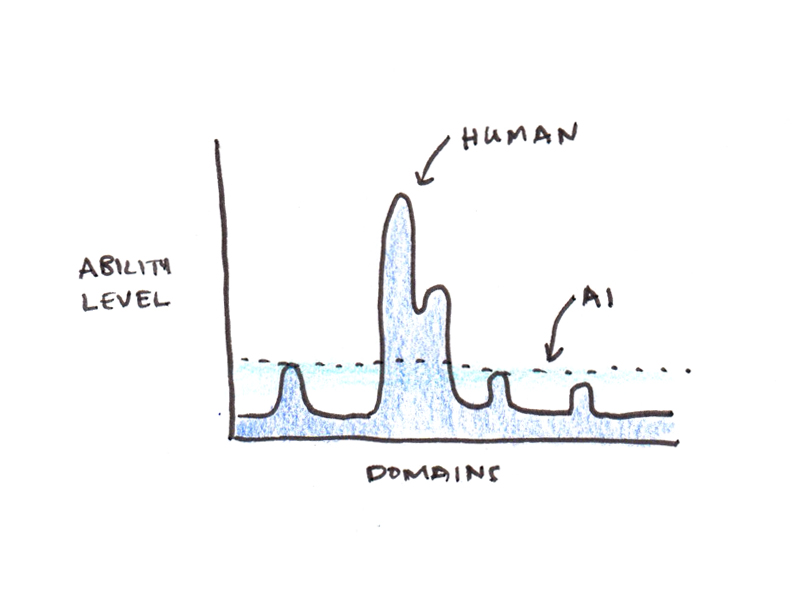

A common theme in responses to my recent essays has been that AI seems great at things you’re not an expert in, but whenever you’re highly competent in an area, AI advice looks pretty bad. It’s like an AI version of Gell-Mann amnesia.

For example, I find AI to be a lousy ghostwriter. Even for small things where I have no ethical qualms about using AI, such as generating paragraph-level summaries of texts I write for a course, I have found AI summaries to be substandard and have ultimately had to write them myself.

Similarly, I’ve found AI to be useless in giving me business advice, creating flashcards, or designing curricula for the subjects I want to learn. Some of this may be a skill issue on my part, or a lack of good prompts, but the fact that I can generally achieve satisfactory results in other domains makes me think that the problem is simply that my standards are too high here.

But even if AI isn’t helpful in the things you know best, its breadth is incredible. Often what AI does best is expose you to knowledge outside your area of expertise, offering suggestions you might not have heard before.

When using AI to help with problem solving, I’ve found it helpful to ask the AI to suggest alternative ways to approach the problem that I might not have considered. This often opens up avenues to solutions that I would never have discovered on my own.

3. Demand verifiable answers (and fact-check important information) when accuracy matters.

Everyone makes a big deal about AI hallucinations. I agree that they are a problem, but all information sources have factual inaccuracies, so the problem is not limited to AI. When I was researching my last book, I was amazed at how often even peer-reviewed papers misquoted something.

I think the real problem with hallucinations is not the error rate, but that the pattern of errors is very inhuman. Generally speaking, when someone writes something that looks like a well-researched essay, it’s less likely to contain factual mistakes than a casual comment on a podcast. AI breaks this convention because it is just as likely to hallucinate when writing in a careful style as when it appears to be speaking off the cuff.

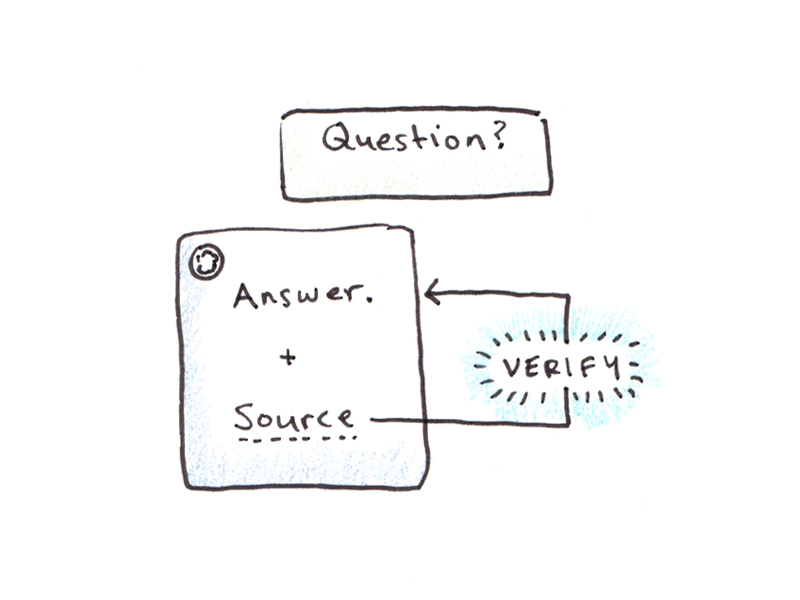

There is a simple solution to this problem: ask questions in a way that makes the answers verifiable. For example:

- Don’t ask for a quote, ask for a source for the quote (so you can check the original).

- Ask for links to the original papers, not just summaries.

- Ask for the code, not just the output of the analysis.

Other tips include asking the AI to double-check a response (which often triggers “reasoning” mode and may catch some hallucinations), and putting the source documents into the chat window and asking the AI where in the documents a claim appears, which saves you time verifying the information (Google’s NotebookLM is useful for this).
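Part of this checking can be done mechanically once you have the source text in hand. Here is a minimal Python sketch, assuming you have pasted the document into a string: it checks whether a quote the AI gave you appears in the source, ignoring differences in case, spacing, and curly versus straight quotation marks. The names `normalize` and `find_quote` are made up for illustration and don't come from any particular tool.

```python
import re
from typing import Optional


def normalize(text: str) -> str:
    """Lowercase, straighten curly quotes, and collapse runs of whitespace."""
    text = text.replace("\u2018", "'").replace("\u2019", "'")
    text = text.replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def find_quote(source: str, quote: str) -> Optional[int]:
    """Return the quote's offset in the normalized source, or None if it is absent."""
    position = normalize(source).find(normalize(quote))
    return position if position >= 0 else None


# Hypothetical source text and two quotes an AI might attribute to it.
source = "The committee  wrote that\nlearning is \u201cslow but durable\u201d when spaced."
real_quote = 'learning is "slow but durable"'
made_up_quote = "learning is fast and easy"

print(find_quote(source, real_quote) is not None)     # quote is present
print(find_quote(source, made_up_quote) is not None)  # quote is absent
```

A missing match doesn’t prove the quote is invented (it might be a paraphrase or come from a different edition), but it tells you which claims to check by hand first.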

4. Create scaffolding first when practicing a skill (don’t just ask to “study”).

A major weakness in my previous ultralearning projects was the lack of good study material. Some of MIT’s open classes had problems galore with solutions; others had very few. Some languages had excellent resources, while others had almost nothing. This can make a night-and-day difference in how easy it is to master a new skill.

By generating good practice material, AI has the power to fix many of these problems, even if it creates new pitfalls.

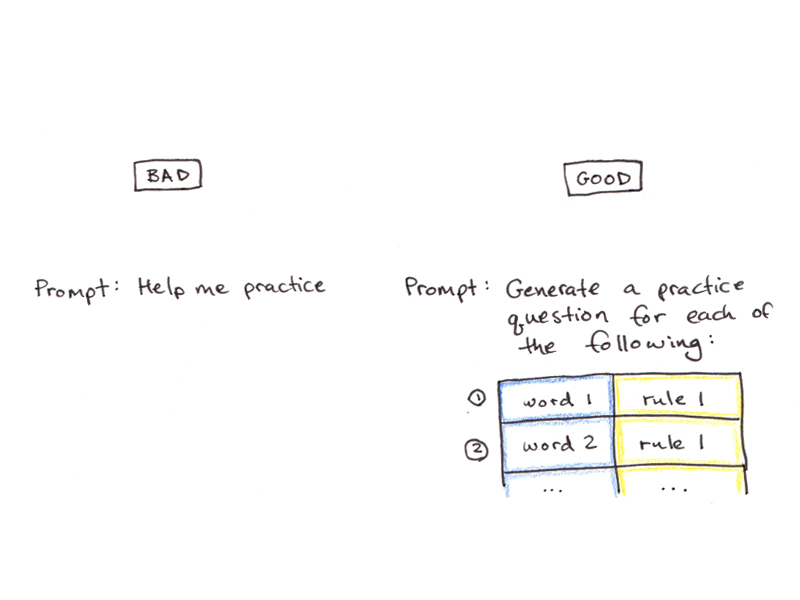

For example, one of my early attempts at using AI for learning was to have ChatGPT drill me on Macedonian grammar. This was a real help, as there are very few resources for learning Macedonian, and grammar can be a major sticking point.

Overall, the AI prompts worked great, and I was getting good feedback on things like properly using clitics and conjugating verbs. But after about an hour of practice the AI would get into a “loop”, where the sentence patterns would fixate on a few variations, and I would practice the same thing over and over again.

One solution to this problem that I have found is to build some scaffolding. In the Macedonian case, coming up with a curriculum that included a list of words, grammar patterns to practice, and various contextual modifiers, and then prompting the AI to deliver exercises following those different structures helped avoid the “looping” problem.
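To make the scaffolding idea concrete, here is a minimal Python sketch. It crosses a small curriculum of topics, grammar patterns, and contexts into a shuffled list of distinct exercise prompts, so each request to the AI targets a different combination. The curriculum entries and the prompt wording are placeholders I made up for illustration, not a vetted Macedonian syllabus.

```python
import random
from itertools import product

# Hypothetical curriculum: replace these placeholder entries with your own lists.
CURRICULUM = {
    "vocabulary": ["food", "travel", "family"],
    "grammar": ["clitic placement", "present-tense conjugation", "negation"],
    "context": ["a formal conversation", "a casual chat", "a short written note"],
}


def build_prompts(curriculum, seed=0):
    """Return one exercise prompt per (vocabulary, grammar, context) combination."""
    combos = list(
        product(curriculum["vocabulary"], curriculum["grammar"], curriculum["context"])
    )
    # Shuffle with a fixed seed so the order is varied but reproducible.
    random.Random(seed).shuffle(combos)
    return [
        f"Give me one Macedonian exercise about {vocab} that practices {grammar}, "
        f"set in {context}. Wait for my answer, then correct it."
        for vocab, grammar, context in combos
    ]


prompts = build_prompts(CURRICULUM)
print(len(prompts))  # 3 x 3 x 3 combinations
print(prompts[0])
```

Because every combination appears exactly once, working through the list forces coverage of the whole curriculum rather than leaving the choice of pattern to the AI.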

I haven’t done any math-heavy projects since generative AI came out, but my strategy would be similar in technical areas. Don’t just ask AI to help you study math. Instead, put together a list of the types of problems you want to learn, and ask it to generate variations on those problems. That way, AI can help you practice the deep techniques across varied surface-level differences.

5. Use AI as a tutor (not a teacher).

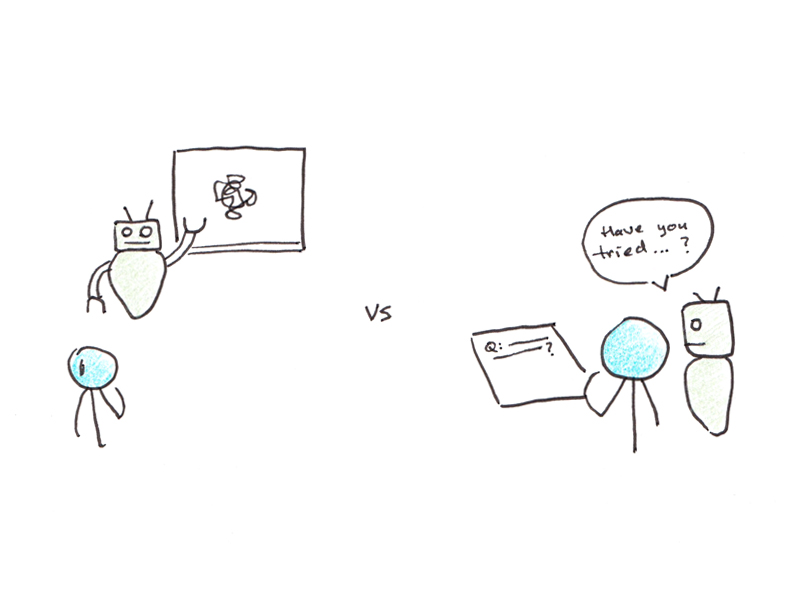

Based on several reader recommendations, I was initially excited by the idea of using AI to help with curriculum design. This initial stage in self-directed learning projects is often one of the most difficult – you are tasked with designing a learning project when you know very little about the subject you are trying to learn.

However, I’ve found that the AI is really bad at this. The problem is not so much that AI cannot design a curriculum for a subject, but rather that it lacks an understanding of the student’s level and how to prioritize teaching the relevant concepts.

For example, I thought a course on transformer architecture would be right in the LLM’s wheelhouse – it’s usually quite fluent on AI-related topics, perhaps due to the plethora of explanations online. But the result turned out to be a mess. It wanted to delve into the latest cutting-edge optimizations before explaining basics like attention mechanisms. Despite hours of fiddling with the prompts, I couldn’t produce anything that came close to the average explanation I could find by searching online.

As a result, I generally avoid “teach me this topic” questions. At the moment, those requests appear to be better fulfilled by real people, perhaps because they have a better mental model of instructional sequencing.

However, if you ask very specific questions, the fact that AI can (generally) give useful answers is quite helpful. Whenever I’m confused or have a question that the author doesn’t answer, I jump back and forth between ChatGPT and the book I’m reading. AI tutoring seems to work better than AI teaching because prompting the AI with specific questions naturally narrows the answers enough that it’s more likely to give you what you want.

Bonus: Vibe code an app to solve your problem (but don’t reinvent the wheel).

Most of the time I’m using the AI, the simple chat interface works well enough. However, for some learning tasks where you want to repeat the same process over and over again, creating an application to do the task for you may be more consistent than prompting over and over again.

I’ve created some helpful utilities for learning, like a tool that takes a Chinese YouTube video as input, transcribes it if subtitles are not available, extracts key words and their definitions, and gives a summary in English. A second tool helps with painting references by breaking down a photo into darks/lights/midtones. Recently, I created some custom Anki flashcards for Macedonian with text-to-speech audio and sentence variations.
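To give a sense of how small the core of such a utility can be, here is a rough Python sketch of the darks/lights/midtones idea. This is not the code from my tool; it’s one simple way to do it, assuming the photo is already loaded as rows of 0–255 RGB values. The cutoffs of 85 and 170 are arbitrary assumptions that split the range into thirds, and the function names are made up for illustration.

```python
def luminance(r, g, b):
    """Approximate perceived brightness (0-255) using the Rec. 601 luma weights."""
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def tonal_bands(gray, dark_max=85, light_min=170):
    """Label every brightness value in a 2D grid as 'dark', 'mid', or 'light'.

    The thresholds are assumptions; tune them to suit the reference photo.
    """
    def band(value):
        if value <= dark_max:
            return "dark"
        if value >= light_min:
            return "light"
        return "mid"

    return [[band(value) for value in row] for row in gray]


# A tiny hypothetical 2x3 "photo" given as (r, g, b) tuples.
photo = [
    [(10, 10, 10), (128, 128, 128), (250, 250, 250)],
    [(200, 30, 30), (30, 200, 30), (255, 255, 0)],
]
gray = [[luminance(*pixel) for pixel in row] for row in photo]
for row in tonal_bands(gray):
    print(row)
```

A real version would read an image file and draw the three bands back out as flat shades of grey, which is the kind of plumbing the AI can fill in for you.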

My advice for creating a quick and dirty app like this to solve a personal learning problem is:

- Ask the AI to write the whole thing as an HTML file using JavaScript. Although this isn’t best practice for actual apps, it means you can simply download the file to your desktop and run it in a browser.

- While a text prompt alone can work, drawing your interface on a piece of paper and taking a picture of it can help, as can giving concrete examples of similar software/applications.

- If your app idea is complex enough, ask the AI to create specs first, and then ask it to build on those specs. Strangely, this seems to work better than just building the app all at once.

- Get an API key so you can query the AI from within your app. If you’re not sure which one to use or how to do it, ask the AI while building the app. I did this for the Chinese video assistant utility, where the AI did the behind-the-scenes work of transliterating, translating, identifying key words, and summarizing into English.

Even though vibe coding is much faster than coding your own app from scratch (even if you know how), it’s still slower than using something off the shelf. Before you start creating something, ask your AI if something similar to what you’re looking for already exists. Bespoke solutions are probably best saved for unusual problems or when you have individual specifications that are not well represented on the market.

How are you using AI to aid your learning efforts? Share with me in the comments some of the ways you’re using AI to learn about new things and deepen your skills.